Record Consistency Analysis Batch – Puritqnas, Rasnkada, reginab1101, Site #Theamericansecrets

The Record Consistency Analysis Batch for Puritqnas, Rasnkada, reginab1101, Site #Theamericansecrets aims to align data provenance, validation, and timing across multiple sources. The approach relies on deterministic checks, centralized schemas, and auditable pipelines so that results can be reproduced. Consolidating configurations and applying synchronized timestamps reduces replication problems and supports transparent decision-making. The framework sets clear expectations for batch-wide integrity, and its controls and outcomes can be examined further to gauge how robust it is in practice.

What Is Record Consistency, and Why It Matters for Cross-Site Data

Record consistency is the degree to which the same data is represented uniformly across records and sources, whether within one system or across integrated systems. It is essential for reliable cross-site data handling and for trustworthy analysis. When it is maintained, batch runs are easier to reproduce, discrepancies are fewer, and audits are simpler. Consistent data practices also support transparent decision-making and interoperability that scales across diverse repositories.

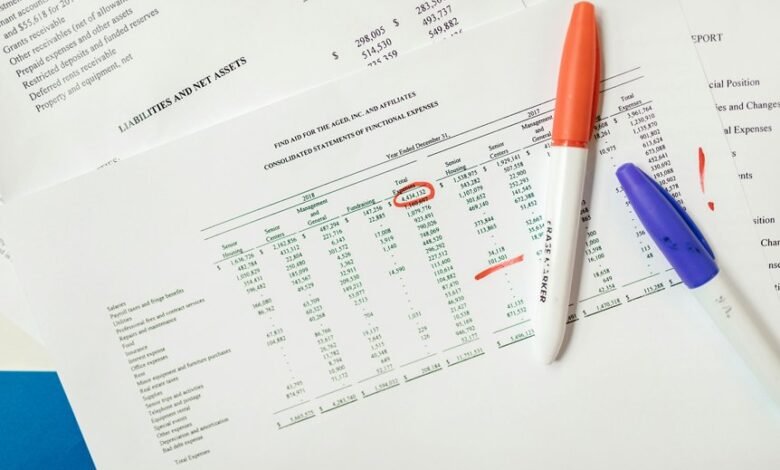

Methodology Spotlight: How We Verify Batch-Wide Integrity

Across batch validations, the process begins with a structured audit of input sources, metadata, and transformation steps to establish a baseline for comparison.

The methodology emphasizes verification consistency through deterministic checks, provenance tracing, and reproducible test runs.

Cross-site integrity is maintained through aligned schemas, synchronized timestamps, and centralized anomaly detection, so that batch-wide results stay transparent and auditable and do not depend on undocumented assumptions.
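As a rough illustration of the deterministic checks and synchronized timestamps described above, the following sketch fingerprints each record after normalizing its timestamp to UTC and then compares two sources by record ID. The function names, the `updated_at` field, and the choice to treat naive timestamps as UTC are assumptions made for this example, not part of the batch framework itself.

```python
import hashlib
import json
from datetime import datetime, timezone


def normalize_timestamp(value: str) -> str:
    """Parse an ISO 8601 timestamp and return it in UTC with second precision."""
    parsed = datetime.fromisoformat(value)
    if parsed.tzinfo is None:
        # Assumption for this sketch: naive timestamps are already in UTC.
        parsed = parsed.replace(tzinfo=timezone.utc)
    return parsed.astimezone(timezone.utc).replace(microsecond=0).isoformat()


def record_fingerprint(record: dict, timestamp_field: str = "updated_at") -> str:
    """Return a SHA-256 digest of a canonical JSON form of the record."""
    canonical = dict(record)
    if timestamp_field in canonical:
        canonical[timestamp_field] = normalize_timestamp(canonical[timestamp_field])
    # Sorted keys and fixed separators make the serialization deterministic.
    payload = json.dumps(canonical, sort_keys=True, separators=(",", ":"), ensure_ascii=False)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def compare_sources(source_a: dict, source_b: dict) -> dict:
    """Compare two sources, each a mapping of record ID to record."""
    ids_a, ids_b = set(source_a), set(source_b)
    mismatched = sorted(
        rid
        for rid in ids_a & ids_b
        if record_fingerprint(source_a[rid]) != record_fingerprint(source_b[rid])
    )
    return {
        "missing_in_b": sorted(ids_a - ids_b),
        "missing_in_a": sorted(ids_b - ids_a),
        "mismatched": mismatched,
    }
```

Because the fingerprint is computed from a canonical form, two records that differ only in key order or time zone offset compare as equal, while any change in a value shows up as a mismatch.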

Benchmarks and Common Pitfalls in Consistency Across Puritqnas, Rasnkada, reginab1101, Site Theamericansecrets

Benchmarks for assessing consistency across Puritqnas, Rasnkada, reginab1101, and Site Theamericansecrets hinge on standardized metrics, reproducible test workflows, and shared data schemas.

The analysis highlights recurring patterns of record inconsistency, emphasizes batch auditing practices, and examines the challenges of cross-site replication.

Data normalization strategies mitigate divergence, while attention to drift, versioning, and metadata fidelity ensures transparent, auditable comparability across environments.
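One way to turn normalization and divergence into a standardized metric is a per-field mismatch rate between a reference source and a candidate source. The sketch below is a minimal example under stated assumptions: the normalization rules (lower-cased keys, collapsed whitespace, case-folded strings) and the function names are hypothetical choices, and real projects would pick rules suited to their own data.

```python
from collections import Counter

# Sentinel used to distinguish "field absent" from "field present with value None".
_MISSING = object()


def normalize_record(record: dict) -> dict:
    """Remove cosmetic differences: key case, string case, and extra whitespace."""
    normalized = {}
    for key, value in record.items():
        if isinstance(value, str):
            value = " ".join(value.split()).casefold()
        normalized[key.strip().lower()] = value
    return normalized


def field_mismatch_rates(reference: dict, candidate: dict) -> dict:
    """Return, per field, the share of shared records whose values differ.

    Both arguments map a record ID to a record (a dict of field values).
    """
    mismatches = Counter()
    totals = Counter()
    for rid in reference.keys() & candidate.keys():
        ref = normalize_record(reference[rid])
        cand = normalize_record(candidate[rid])
        for field in ref.keys() | cand.keys():
            totals[field] += 1
            if ref.get(field, _MISSING) != cand.get(field, _MISSING):
                mismatches[field] += 1
    return {field: mismatches[field] / totals[field] for field in sorted(totals)}
```

Tracking these rates per batch gives a simple signal for drift: a field whose mismatch rate rises between runs points to a change in one source's schema, version, or metadata handling.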

Practical Steps for Reproducible Batch Analysis in Your Projects

To implement reproducible batch analysis effectively, teams should establish a disciplined workflow built on explicit data provenance, consistent preprocessing, and version-controlled configurations. The approach prioritizes rigorous data validation, transparent documentation, and reproducible scripts. Cross-site replication becomes feasible with standardized environments, shared pipelines, and frequent verification. Anomaly detectors support traceability, while modular components let teams experiment at scale with predictable outcomes.
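A lightweight way to record provenance for each run is a manifest that captures hashes of the configuration and the inputs alongside the interpreter version. The following is a minimal sketch, not a prescribed format: `build_manifest`, `manifests_match`, and the manifest keys are names invented for this example.

```python
import hashlib
import json
import platform


def sha256_bytes(data: bytes) -> str:
    """Return the hexadecimal SHA-256 digest of the given bytes."""
    return hashlib.sha256(data).hexdigest()


def build_manifest(config: dict, inputs: dict) -> dict:
    """Describe a batch run by hashing its configuration and inputs.

    `config` is a JSON-serializable dict; `inputs` maps a source name
    to the raw bytes read from that source.
    """
    # Sorted keys make the hash independent of the order the config was written in.
    config_json = json.dumps(config, sort_keys=True, separators=(",", ":"))
    return {
        "config_sha256": sha256_bytes(config_json.encode("utf-8")),
        "input_sha256": {name: sha256_bytes(data) for name, data in sorted(inputs.items())},
        "python_version": platform.python_version(),
    }


def manifests_match(first: dict, second: dict) -> bool:
    """Two runs are comparable only if their config and input hashes agree."""
    return (
        first["config_sha256"] == second["config_sha256"]
        and first["input_sha256"] == second["input_sha256"]
    )
```

Storing the manifest next to the batch output, under version control, lets a later run at another site confirm that it started from the same configuration and inputs before its results are compared.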

Conclusion

The batch closes with a quiet, unbroken rhythm, each source harmonizing under a shared schema. Yet beneath the calm, a lingering uncertainty threads through timestamps and provenance, reminding readers that replication is never finished. As benchmarks settle and anomalies surface, the workflow stands ready: transparent, version-controlled, auditable. The final frame holds a promise: when every step is documented and reproducible, the true consistency of cross-site data becomes not a claim, but a verifiable conclusion awaiting its next test.